Abstract

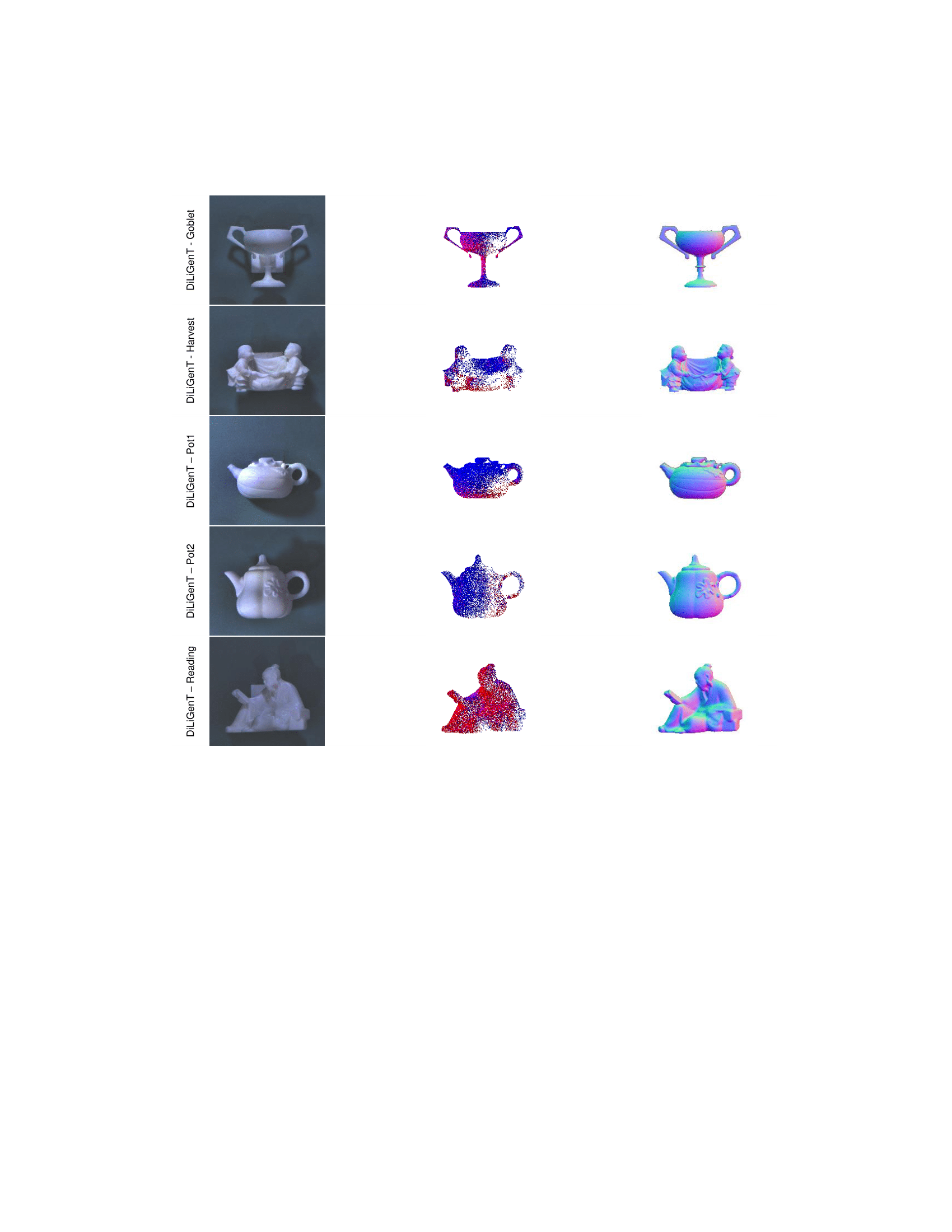

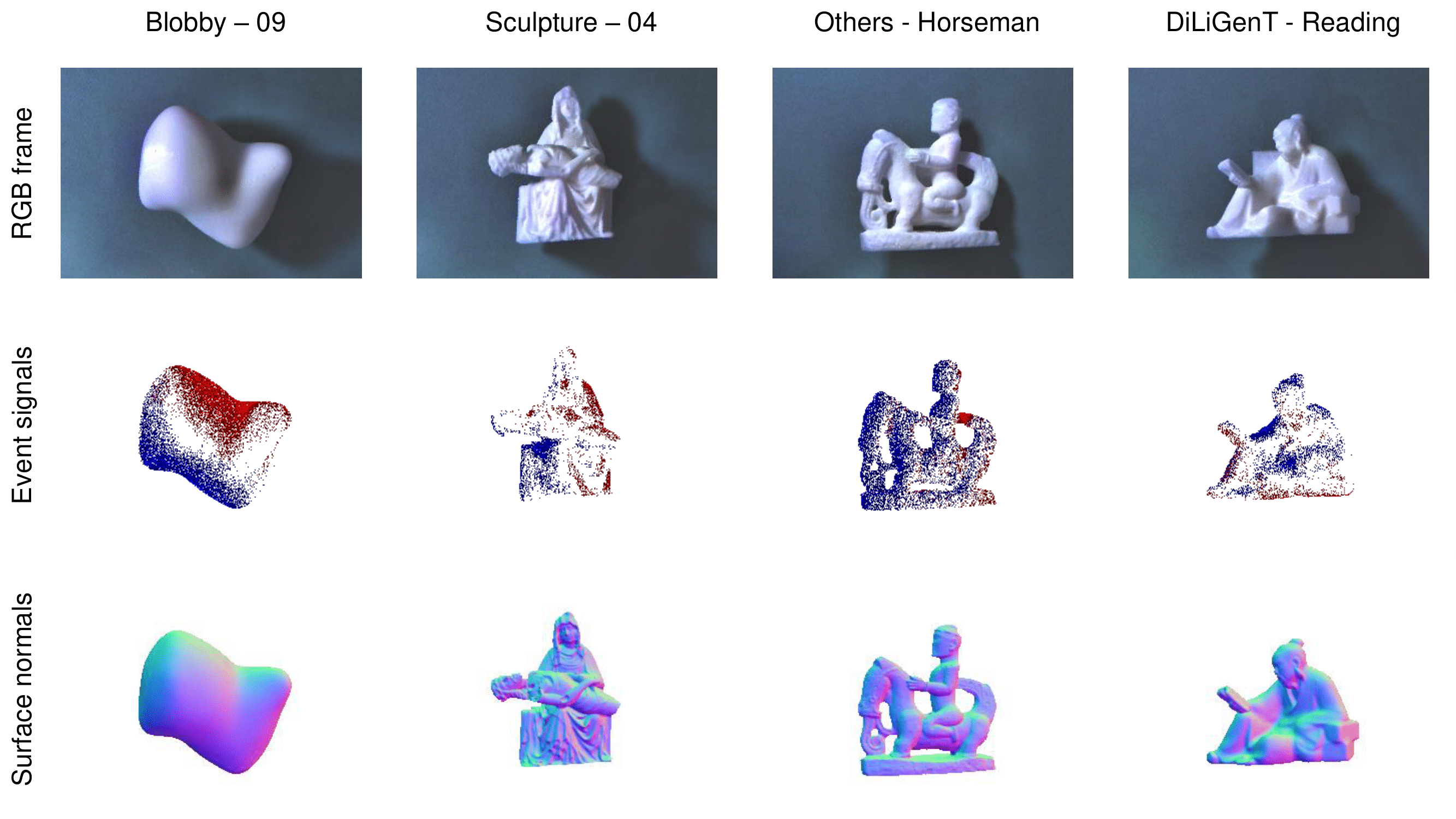

Photometric stereo methods typically rely on RGB cameras and are usually performed in a dark room to avoid ambient illumination. Ambient illumination poses a great challenge in photometric stereo because of the restricted dynamic range of RGB cameras. To address this limitation, we present a novel method, the Event Fusion Photometric Stereo Network (EFPS-Net), which estimates the surface normals of an object in an ambient light environment through a deep fusion of RGB and event cameras. The high dynamic range of event cameras captures a broader range of light information than RGB cameras can provide. Specifically, we propose an event interpolation method to obtain ample light information, which enables precise estimation of the surface normals of an object. By using RGB-event fused observation maps, our EFPS-Net outperforms previous state-of-the-art methods that depend only on RGB frames, resulting in a 7.94% reduction in mean average error. In addition, we curate a novel photometric stereo dataset by capturing objects with RGB and event cameras under numerous ambient light environments.

Method

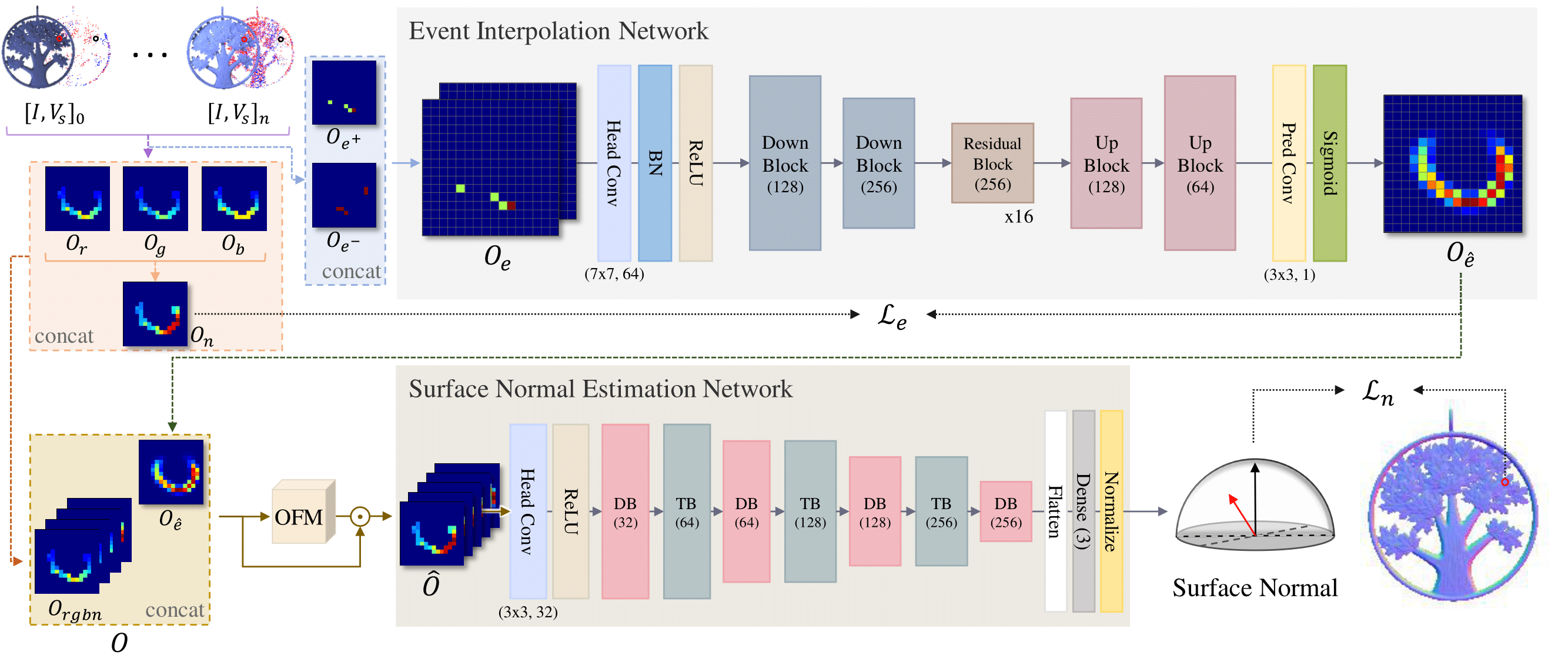

Model Overview

An overview of our proposed architecture. Built upon EFPS-Net, our model uses observation maps fused from RGB frames and event signals. EFPS-Net consists of two main networks and one module: (1) the Event Interpolation Network (EI-Net), which converts two sparse event observation maps into one dense interpolated observation map that represents light information more smoothly; (2) the Observation Fusion Module (OFM), which fuses the observation maps by relating them to one another; and (3) the Surface Normal Estimation Network (SNE-Net), which uses the fused observation maps to estimate the surface normal vector precisely.
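To make the term "observation map" concrete, here is a minimal sketch. It assumes the convention common in learning-based photometric stereo, where each pixel gets a small 2D grid indexed by the projected light direction and each cell stores the normalized intensity observed under that light. The function name `build_observation_map`, the input layout, and the grid size are illustrative assumptions, not the authors' implementation, and the sketch does not cover event signals, EI-Net, or the OFM.

```python
def build_observation_map(light_dirs, intensities, size=32):
    """Build a size x size observation map for a single pixel.

    light_dirs:  list of (lx, ly, lz) unit light directions (assumed layout).
    intensities: list of floats, one observed intensity per light direction.
    """
    if len(light_dirs) != len(intensities):
        raise ValueError("one intensity is required per light direction")

    grid = [[0.0] * size for _ in range(size)]
    peak = max(intensities, default=0.0)
    if peak <= 0.0:
        return grid  # no usable signal at this pixel

    for (lx, ly, _lz), value in zip(light_dirs, intensities):
        # Map lx and ly from [-1, 1] to integer grid indices in [0, size - 1].
        col = min(size - 1, max(0, round((lx + 1.0) * 0.5 * (size - 1))))
        row = min(size - 1, max(0, round((ly + 1.0) * 0.5 * (size - 1))))
        # Normalize by the brightest observation so the map is scale-invariant.
        grid[row][col] = value / peak
    return grid


# Toy usage: three lights, one pixel, a 5 x 5 map.
lights = [(0.0, 0.0, 1.0), (0.6, 0.0, 0.8), (0.0, -0.6, 0.8)]
obs = build_observation_map(lights, [0.9, 0.45, 0.3], size=5)
```

With only a few lights most cells stay empty, which illustrates why a sparse map might benefit from interpolation into a denser one.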

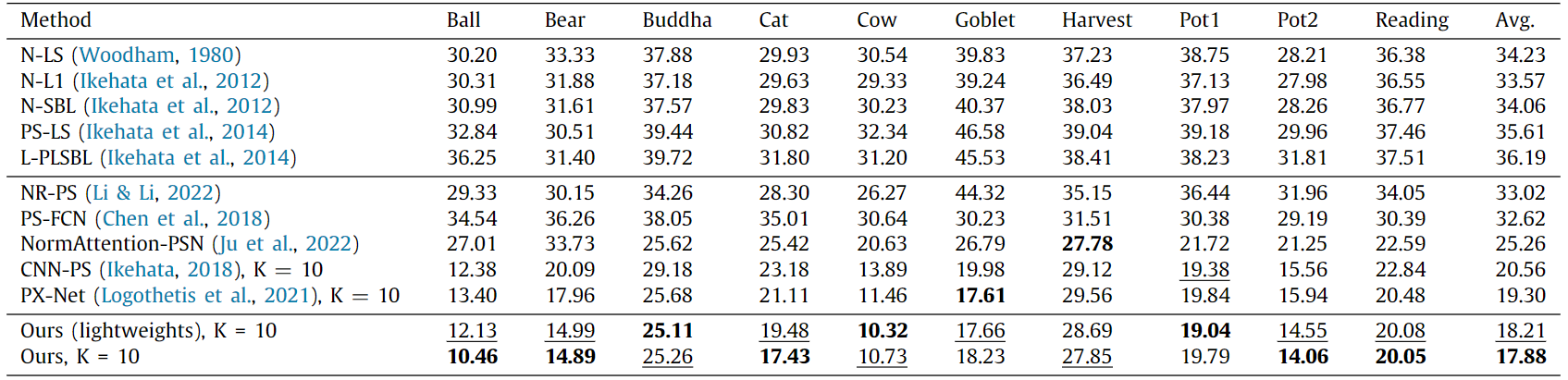

Experiments

Our method outperforms previous methods on a real-world dataset of paired RGB and event captures.

Bold values mark the best result and underlined values mark the second best.
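For readers unfamiliar with how surface normal estimates are usually scored, the sketch below computes the mean angular error in degrees between predicted and ground-truth normals. It assumes the reported error is of this angular kind, which is a common choice for surface normals. The function names are hypothetical and the inputs are toy values, not results from the paper.

```python
import math


def angular_error_deg(n_pred, n_true):
    """Angle in degrees between two 3D normal vectors."""
    dot = sum(p * t for p, t in zip(n_pred, n_true))
    norm = math.sqrt(sum(p * p for p in n_pred)) * math.sqrt(sum(t * t for t in n_true))
    if norm == 0.0:
        raise ValueError("normal vectors must be non-zero")
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def mean_angular_error_deg(pred_normals, true_normals):
    """Average angular error over paired lists of normals."""
    if len(pred_normals) != len(true_normals) or not pred_normals:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [angular_error_deg(p, t) for p, t in zip(pred_normals, true_normals)]
    return sum(errors) / len(errors)


# Toy usage: one perfect prediction and one that is off by a right angle.
pred = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0)]
true = [(0.0, 0.0, 1.0), (0.0, 0.0, 1.0)]
mae = mean_angular_error_deg(pred, true)
```

In practice the average would be taken only over pixels inside the object mask, which this sketch leaves to the caller.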

BibTeX

@article{Ryoo_2023,
  doi = {10.1016/j.neunet.2023.08.009},
  url = {https://doi.org/10.1016%2Fj.neunet.2023.08.009},
  year = 2023,
  month = {aug},
  publisher = {Elsevier {BV}},
  author = {Wonjeong Ryoo and Giljoo Nam and Jae-Sang Hyun and Sangpil Kim},
  title = {Event fusion photometric stereo network},
  journal = {Neural Networks}
}

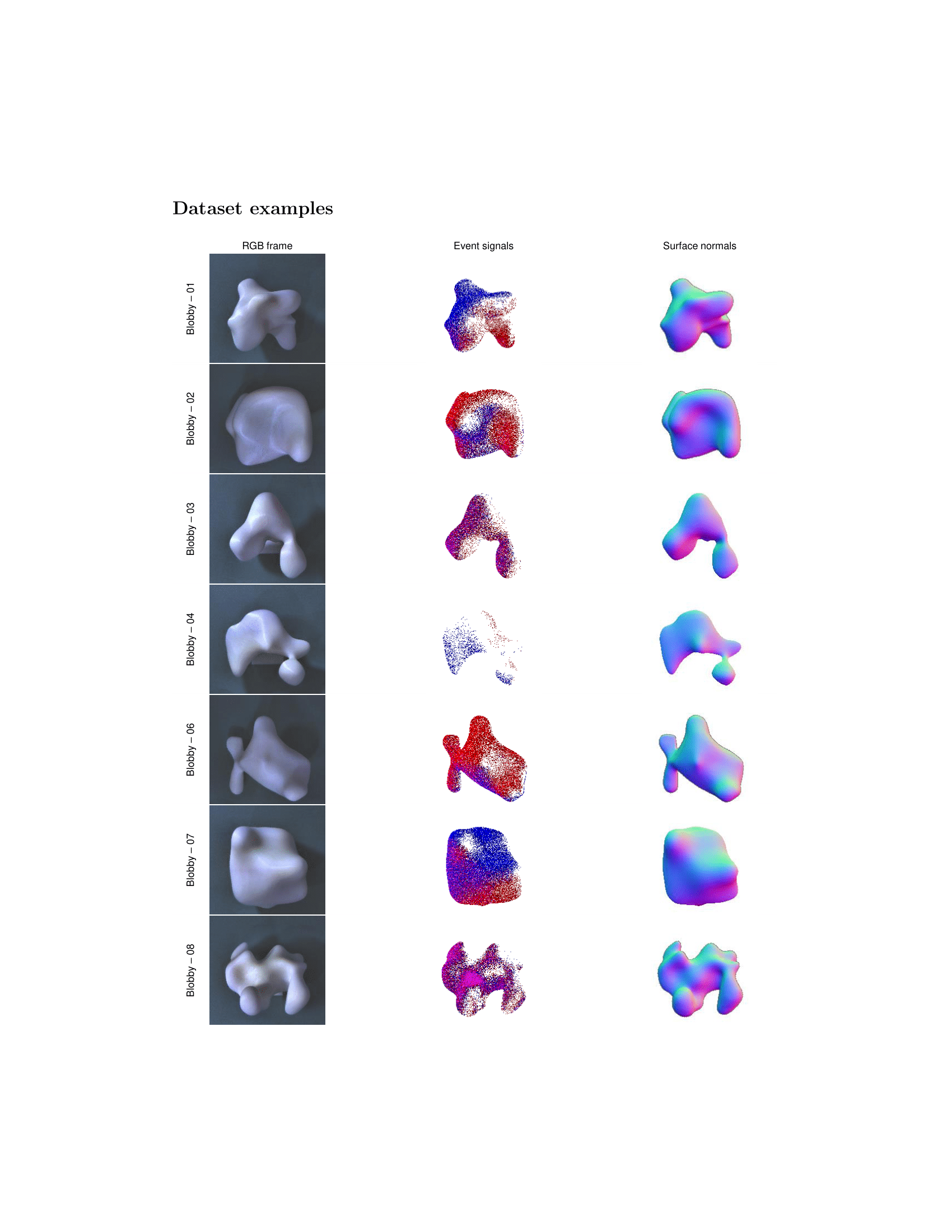

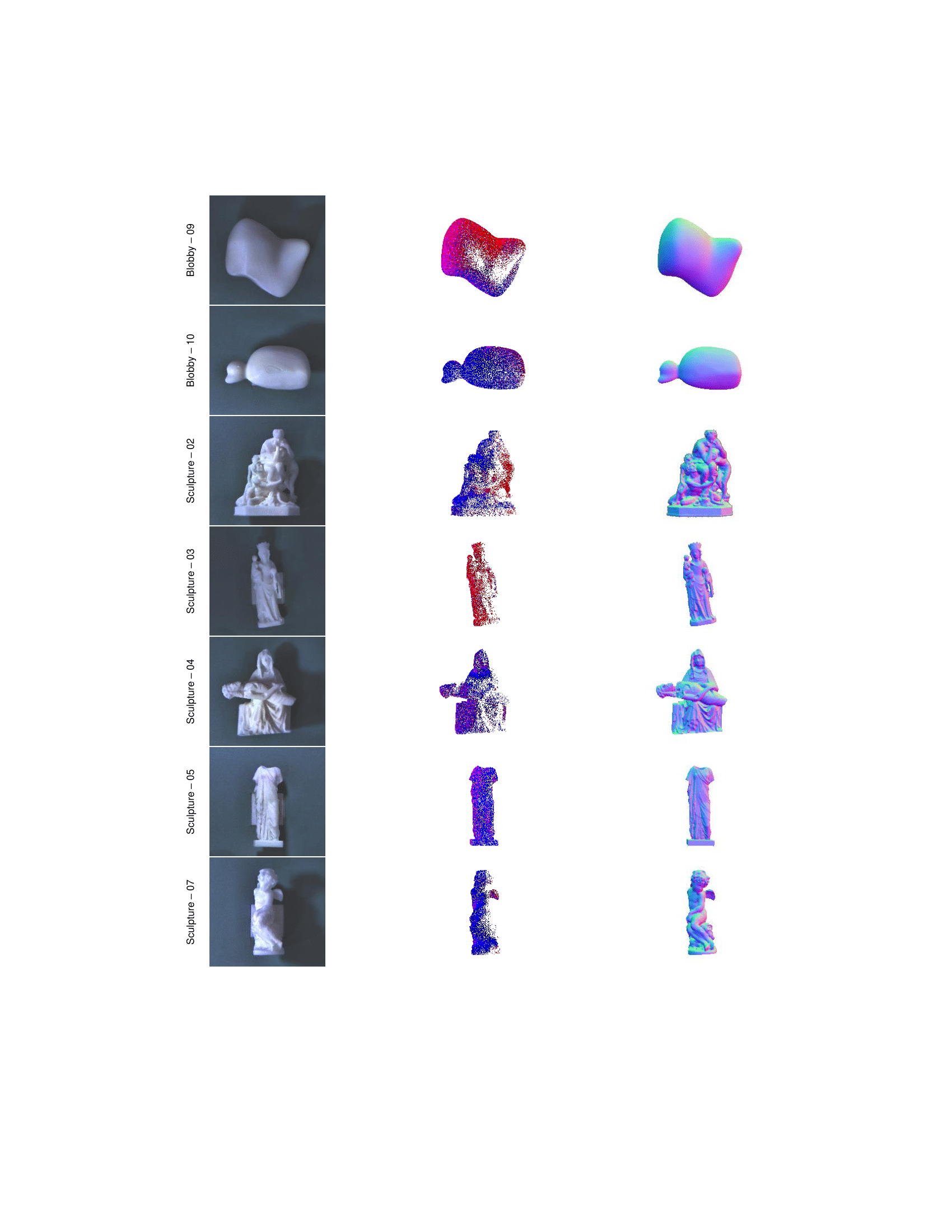

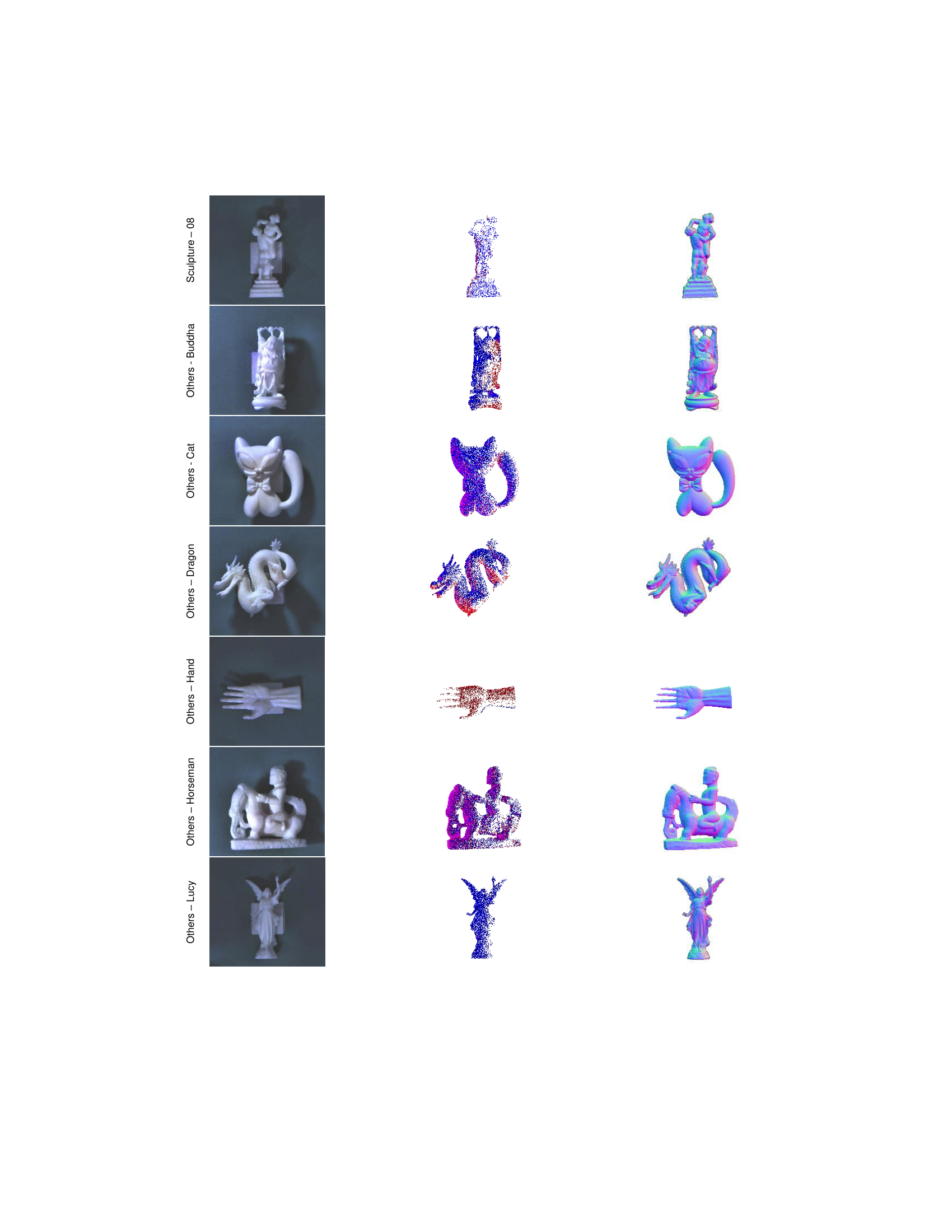

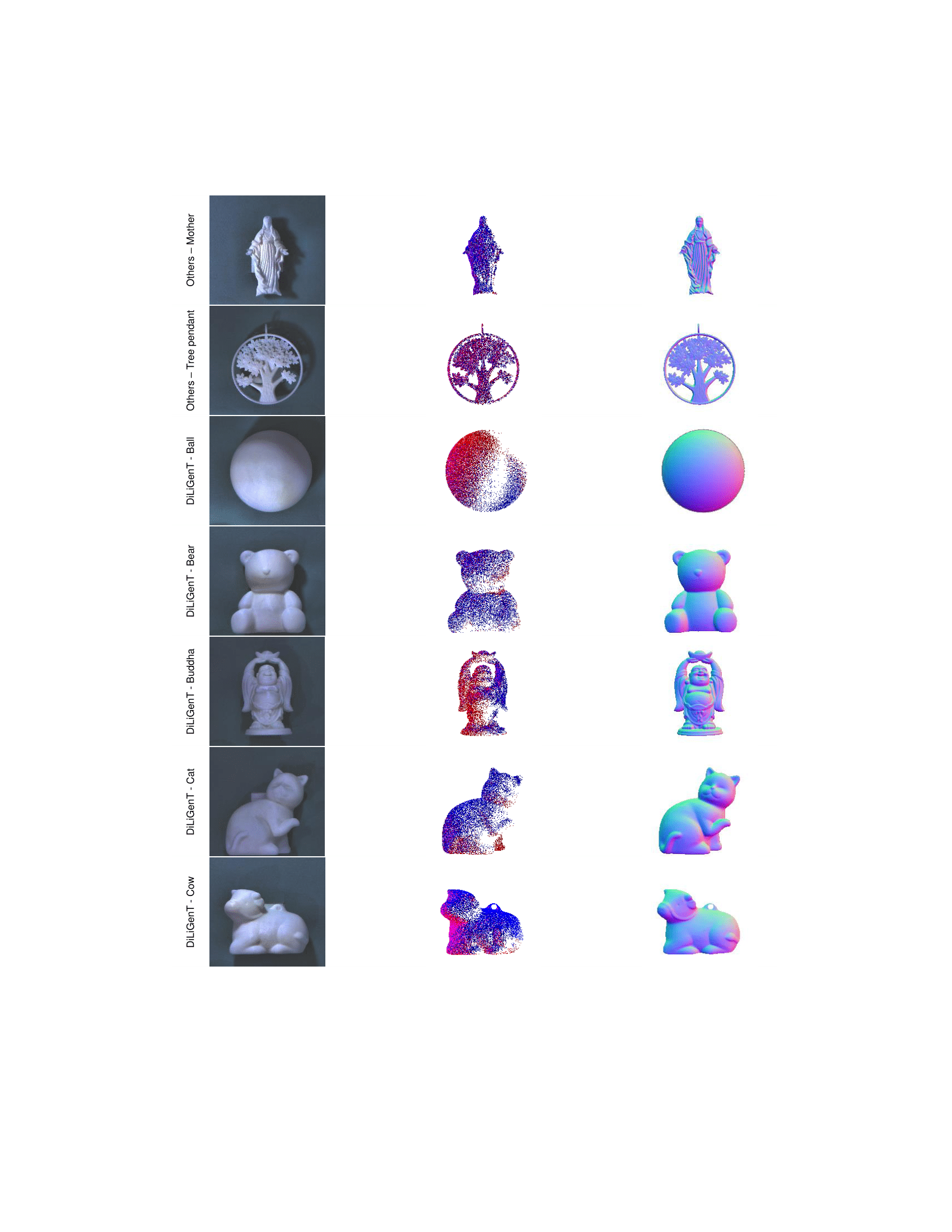

Datasets